Table of Contents

How Parkrun volunteers sort barcodes - a computer scientist's perspective

On the Christmas day of 2018, I volunteered at Norwich Parkrun. Towards the end, I ended up helping out with sorting the plastic barcodes. I find the whole process interesting. This is because sorting algorithm is an essential part of computer science curriculum 1). I do have a special connection to sorting algorithm - I bumped into Sir Charles Antony Richard Hoare FRS FREng 2)3), the inventor of quicksort algorithm 4), the day before I was formally taught quicksort for the second time 5) as a first year computer science undergrad in University of York.

Sorting numbers inside a computer is a bit different to sorting objects in physical world. This is mainly because the uniform cost model does not apply in the physical world 6). However some of the concepts in algorithm analysis can still be applied to physical world, for example time complexity and space complexity 7).

In this blog post, we analyse the algorithm which Parkrun volunteers use to sort barcodes, using some concepts from computer science. This blog post is written in such a way so you can follow it, even if you have not formally studied computer science.

In short, Parkrun uses bucket sort to sort their barcodes after each event. I do not think I have encountered an implementation of bucket sort on computers, yet I was treated with a real life implementation of bucket sort on Christmas day.

Analysis of algorithms

An algorithm is an unambiguous set of instruction to solve a certain class of problem. A class of problem may be solved by multiple distinctly different algorithms. Different algorithms may be designed for different situation. They may have different advantages and disadvantages.

For example, there are different ways of doing multiplication. One can perform multiplication by repeated addition. However, more commonly for human, multiplication involving two single-digit number is done by reciting the multiplication table (also known as the “times table”). This is because repeated addition can take a long time. For multiplying two numbers which have multiple digits, one might prefer to perform long multiplication, which itself involves reciting the multiplication table. These three methods of performing multiplication are equally valid. They are all examples of algorithm.

In human, multiplication by repeated addition takes such a long time, so it only tends to be used as a last ditch effort for primary school children to complete their exam - it is only used by pupils who cannot remember their multiplication table. In computers, multiplication by repeated addition can be easily implemented on by the use of a for-loop. Although this does not happen very often, as multiplication is supported by most hardware. Although long multiplication and reciting the multiplication table is popular amongst human, computers tend not to use that. Computers use the “shift and add” algorithm, partly because computers operate in binary 8).

Different algorithms have different “complexity”. The complexity of an algorithm describes how the algorithm behaves as the input size changes. There are two kind of complexities - time complexity and space complexity. Time complexity describes how the number of steps required by an algorithm scales, as the input size increases. The space complexity describes how the temporary storage space required by an algorithm scales, as the input size increases.

We describe algorithmic complexity using the “big O notation”. The letter O stands for “the order of the function”. So if one describes the time complexity of function $T$ as $T(n) = O(n^2)$, it means that for function $T$ to process $n$ inputs, the steps required scales in the order of $n^2$. In layman's term, for function $T$ to process $n$ inputs, it needs at least $n^2$ amount of steps. I know some computer scientists and mathematician think that the big O notation is effectively an abuse of mathematical notation, but this is literally how the world works. Oh wells.

The same notation can be applied for the (temporary) space requirement of an algorithm. You can think of this as the amount of scratch paper you need. For example, to do long multiplication, you need at least $3+n$ lines, $n$ being the number of digits of your shortest number. If you don't believe me, you can try it out yourself. The number $3$ comes from your two multiplicand and your results, the $n$ comes from each intermediate lines of your multiplication. This is assuming that you know about commutativity of multiplication, and you always put the longest multiplicand in your first line of your long multiplication! Of course in computers, this refer to the temporary storage space for your variable.

Cost models

When we analyse algorithms on a computer, we tend to use uniform cost model 9), this assumes that the time taken for certain fundamental operation is the same, for example, accessing an element of an array, addition, subtraction, multiplication, division and comparison.

The uniform cost model is certainly not true for human. A good example would be accessing an element of an array. For a computer, it makes no difference whether you are trying to access the 12th element of an array or the 112th element of an array. However, in the physical world, things are slightly different. First of all, we need to define what an “array” is in the physical world. It can be a table of numbers, or a stack of plate. Either way, there is going to be some distance between the 12th element of an array and the 112th element of an array. So accessing them is going to take different amount of time. This can be especially a problem if you did not label your elements, and you end up having to count from the first element of an array. (I suppose this can be a problem for computers too, if you decided to use linked list to implement your data structure.)

Bucket sort

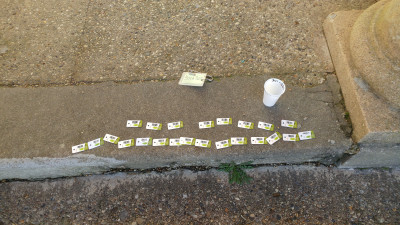

Having got the basics of algorithmic analysis out of the way, we can finally look at how Parkrun volunteers sort the barcodes. Parkrun volunteers use a physical implementation of bucket sort 10)11). This is illustrated by the following two pictures taken at Parkrun.

Bucket sort is a distribution sort - the original input is split into multiple substructure, where comparison sort is performed. It is effectively a two-stage sorting algorithm, how it works can be summarised in the following four steps:

- Initialise the empty buckets.

- Put each object into their corresponding bucket (stage 1).

- Sort each non-empty bucket using a comparison sort algorithm (stage 2).

- Visit each bucket in order and put all elements back into the original array.

On computers, bucket sort has the following properties:

- average time complexity $O(n+\frac{n^2}{k}+k)$,

- worst case time complexity: $O(n^2)$,

- worst case space complexity: $O(n \cdot k)$,

where $k$ is the number of buckets.

In Parkrun's particular implementation, the total number of buckets is not defined. Instead, each bucket is set to hold 25 barcodes. The total number of buckets depends on the amount of plastic barcodes. It is easier for human to sort individual bucket by limiting their size.

The advantage of Parkrun's two-stage sorting approach is that it converts the problem of sorting barcodes into an embarrassingly parallel problem 12), which is a type of workload which requires little or no effort to separate into parallel subtasks. Multithreading naturally occurs during the barcode sorting process.

Distributing barcodes into their respective buckets

The process of distributing barcodes to their respective buckets (stage 1) is a completely stateless process. Volunteers can join and exit the sorting process any time they way. The volunteer pool operates much like a thread pool 13). There is no hand-over process, each worker thread (volunteer) does not need to obtain any state information before joining the task (sorting), and it does not need to pass any state information to another worker thread when exiting. Stage 1 is completely thread safe 14). There is no information transfer between each worker thread. Stage 1 is also completely atomic 15), as the barcode can either be inside or outside its corresponding bucket.

In fact, I took advantage of the stateless and atomic nature of the stage 1 workload when I was helping out. My hands were quite cold, I was losing dexterity, so I exited the task. I then went and got a cup of hot chocolate to warm up my hands. I rejoined the task after my hands were warm.

The first stage establishes a partial ordering 16) of the original data structure (all of the barcodes) - there is ordering amongst the buckets, but there is no ordering within each bucket. To establish total ordering 17), each bucket need to be sorted using comparison sort.

Sorting individual buckets using insertion sort

Different worker threads (volunteers) tend to use different sorting algorithm. Personally I use insertion sort, which is a comparison sort.

Insertion sort is a simple - this is how it works:

- Get a list of unsorted items.

- Divide the unsorted items into two partitions - sorted and unsorted. Set a marker for the sorted section after the first item in the list. The first item is now marked sorted. The rest of the items are marked as unsorted.

- Repeat step 4 to 6 until the unsorted partition is empty.

- Select the first unsorted item.

- Swap this item to the sorted partition, until it arrives at the correct sorted position.

- Advance the marker, so the sorted partition increase its size by one, and the unsorted partition decrease its size by one.

On computers, insertion sort has the following property:

- best case time complexity $O(n)$

- average time complexity $O(n^2)$,

- worst case time complexity: $O(n^2)$,

- worst case space complexity: $O(n)$.

More information about insertion sort can be found at 18) and 19), they explain this topic far better than me.

There are also video animation of insertion sort on the Internet:

|

You might want to turn off the audio for this video. |

Leave the audio on for some Romanian folk dance music. |

As a human, I like insertion sort, because it allows me to know how far I have done. The only state information I need to track is the position of the partition marker. This can be done easily by holding the unsorted barcodes in my hand, and place the sorted barcodes on the floor.

However there are some physical optimisation which I made. I collapsed consecutive sorted barcodes into clusters. This is because physically inserting a barcode involves moving all the adjacent barcodes, which takes quite a bit of effort on a rough surface. The two figures below illustrate what I meant:

Preallocation of enough empty space for the missing barcodes should help with the problem as well - that way you don't need to shuffle those out-of-place barcodes.

Conclusion

I quite enjoyed participating barcode sorting. It is quite of interesting to observe a physical implementation of bucket sort. A lot of computer algorithms are inspired by real life processes. I think perhaps the bucket sort algorithm you learn from textbooks is the abstraction of real life bucket sort process. After all, Wikipedia does not say who came up with bucket sort 20). It really does make me think - a lot of assumptions in the physical world do not apply in computers, a lot of assumptions that work in computers do not apply in real life. If real life processes can inspire computer algorithms, then surely computer algorithms can inspire real life processes.

Further readings

There are other topics which link computer science into the way the physical world works, for example operational research 21). There was a time which all computation were performed by physical objects, to explore this topic, have a look at analogue computers 22). Finally, there have been works on explaining the limitation of computation using physical phenomenons, to explore this topic, have a look at 23) and 24)